IBM Cloud offers IBM-managed VMware Cloud Director through its VMware Solutions Shared offering. This offering is currently available in IBM Cloud’s Dallas and Frankfurt multi-zone regions, enabling you to deploy VMware virtual machines across three availability zones in those regions.

IBM Cloud also offers a virtual private cloud (VPC) for deployment of virtual machine and container workloads. Although VMware Cloud Director is operated in IBM Cloud’s “classic infrastructure,” it is still possible to interconnect your Cloud Director workload with your VPC workload using private network endpoints (PNEs) that are visible to your VPC.

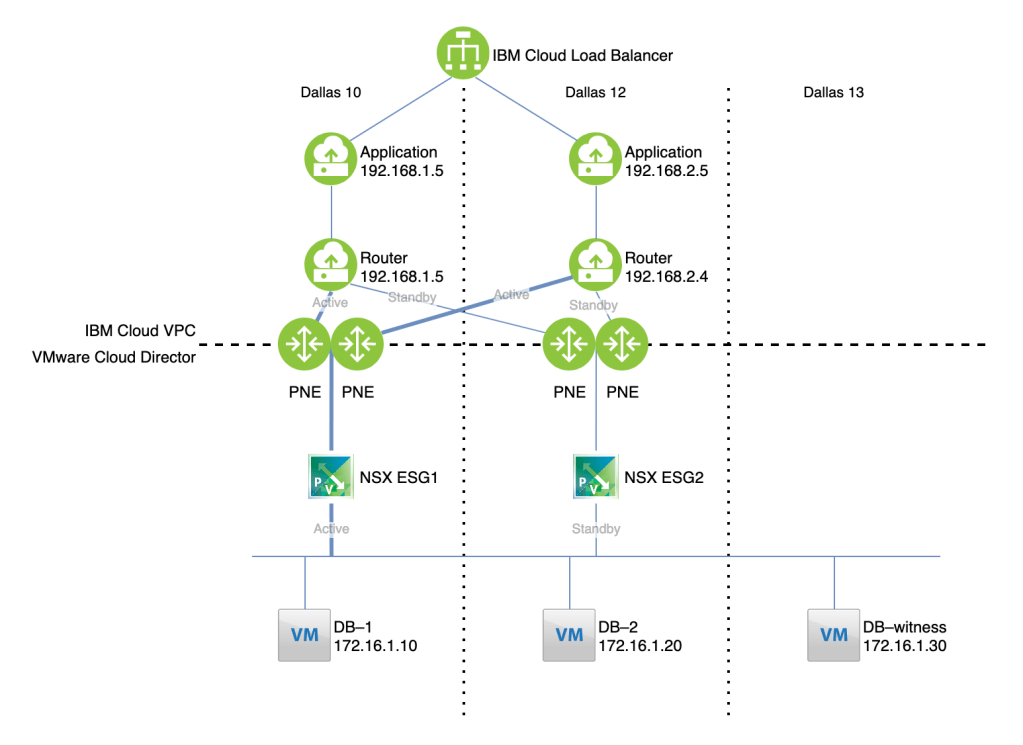

In this article we’ll discuss how to implement this solution. This solution allows for bidirectional connectivity, but for illustrative purposes consider the use case of hosting an application in IBM Cloud VPC and a database in VMware Cloud Director:

Reviewing this topology from the top down:

- Incoming traffic is handled by an IBM Cloud Load Balancer

- The load balancer distributes connections to applications running on virtual server instances (VSIs) in our example, or optionally to Kubernetes services. The application is deployed in two zones for high availability.

- Each zone in the VPC has a router that will tunnel traffic to and from Cloud Director using BGP over IPsec. For the purposes of this exercise we used a Red Hat Enterprise Linux 8 VSI, but you could deploy virtual gateway appliances from a vendor of your choice.

- The VPC routers connect over the private IBM Cloud network through private network endpoints (PNEs) to edge appliances in Cloud Director.

- The Cloud Director workload is distributed across three virtual datacenters (VDCs), one in each availability zone. Two edge services gateways (ESGs), one in each of two zones, serve as the ingress and egress points. These operate in active–standby state so that a stateful firewall can be used.

- The database is deployed across three zones for high availability.

Caveats

The solution described here uses the IBM Cloud private network. This is a nice feature of the solution, but for reasons that may not be initially obvious, it is also required at the moment. If you wish to connect a single availability zone between VCD and VPC, you could do so using a public VPN connection between your VCD edge and the IBM Cloud VPC VPN gateway service. However, the VPC VPN service currently does not support BGP peering, so it is not possible to create a highly available connection that can fail over to a different VCD edge endpoint.

Also, the solution outlined here deploys only a single router device in each VPC zone. For high availability, you likely want to deploy multiple virtual router appliances, and for routing purposes share a virtual IP address which you reserve in your VPC subnet. At this time, IBM Cloud VPC does not support multicast or protocols other than ICMP, TCP, and UDP. These limitations exclude protocols like HSRP and VRRP; you should ensure that your router’s approach to HA is able to operate using unicast ICMP, TCP, or UDP.

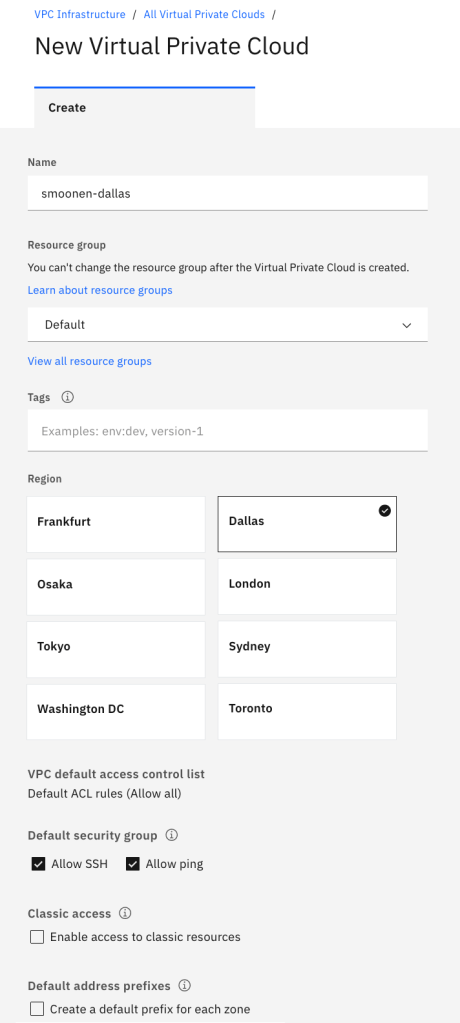

Deploy your VPC resources

Create a VPC in Dallas or Frankfurt. The VPC will automatically generate address prefixes and subnets for you; I recommend you de-select “Create a default prefix for each zone” so that you can choose your own later:

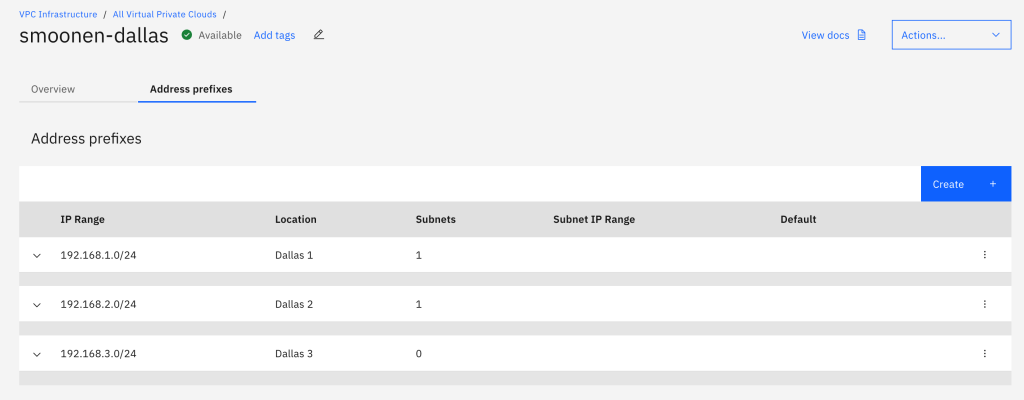

Next, navigate to your VPC and create address prefixes of your choice:

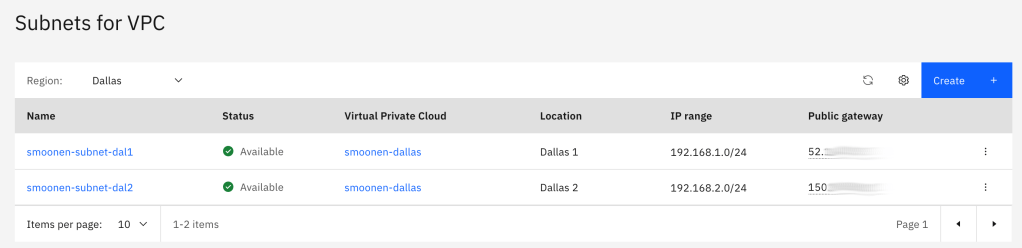

In order to create subnets, you must navigate away from the VPC to the subnet page. In our case, since we are hosting workloads in only two zones, we needed only two subnets:

Next, create four virtual server instances (VSIs), two in each zone. Within each zone, one VSI will serve as the application and the other will serve as a virtual router. For the purposes of this example we use Red Hat Enterprise Linux 8.

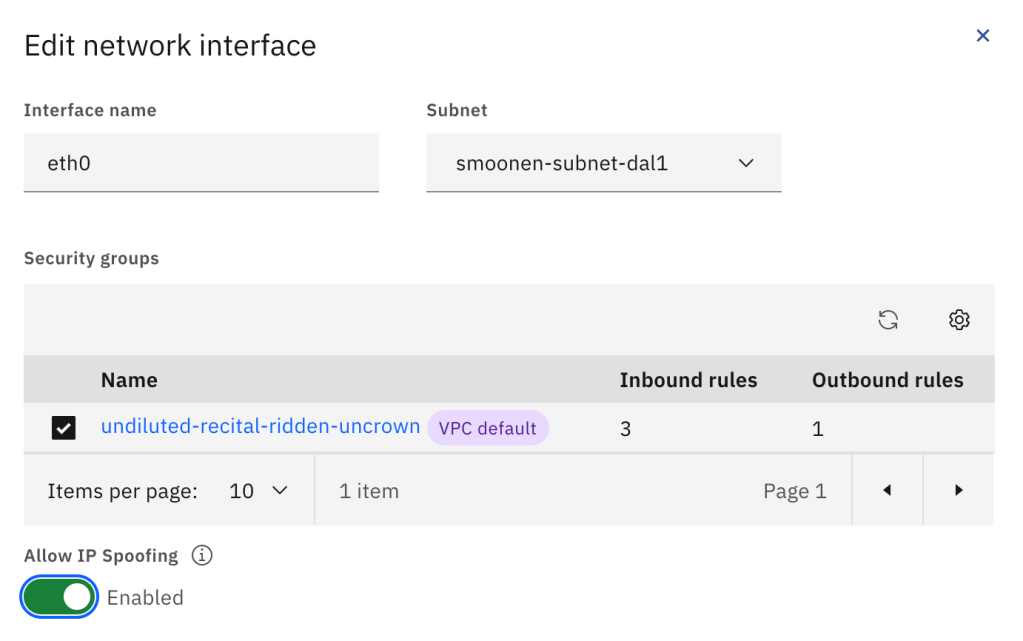

You need to modify the router VSI network interfaces, either when you create them or afterwards, to enable IP spoofing. This will allow the routers to forward traffic whose source or destination is an IP address other than their own:

Be sure to update the operating system packages and reboot each VSI.

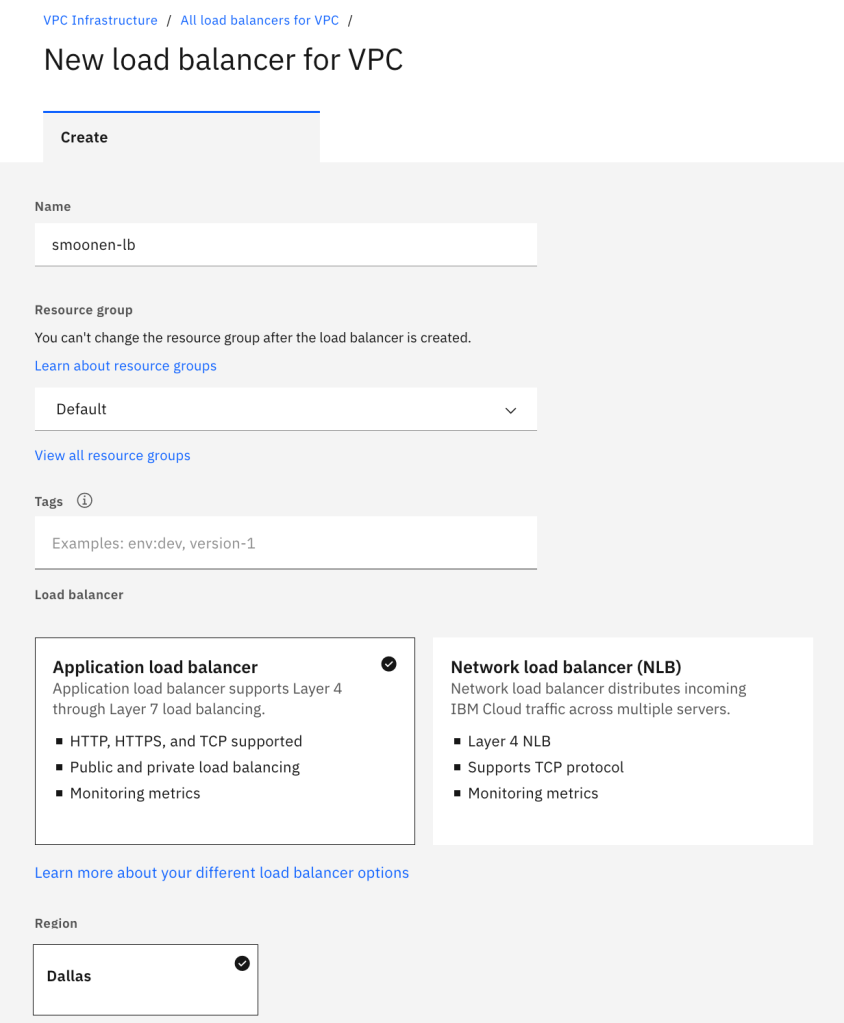

Finally, create an IBM Cloud load balancer instance pointing to each of your application VSIs. Because this is a multi-zone load balancer you must use the DNS-based application load balancer:

Deploy your Cloud Director resources

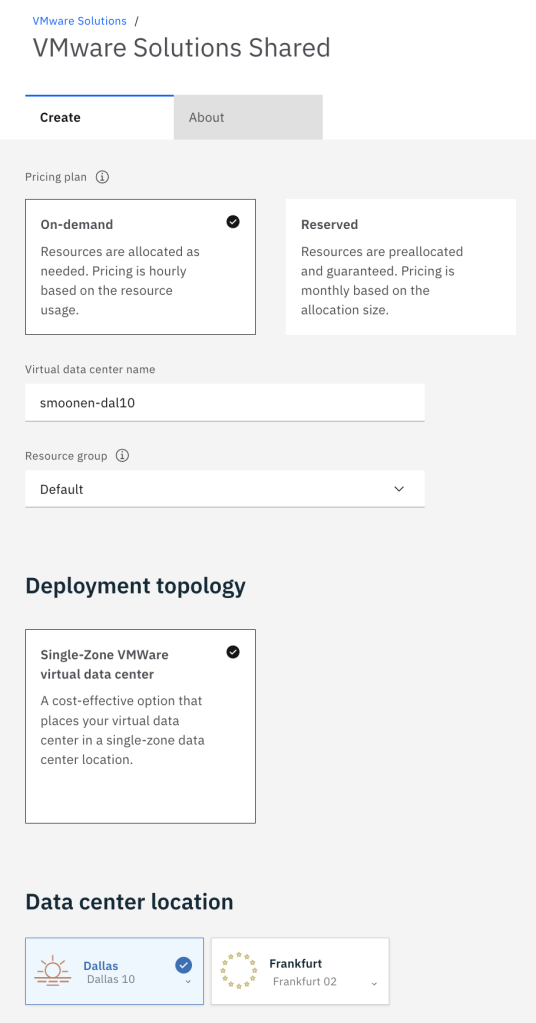

Next, create three VMware Solutions Shared virtual data centers (VDCs). Note that while VPC availability zones are named 1, 2, and 3, VDC availability zones are named according to the IBM Cloud classic infrastructure data center names. Thus, we will deploy to Dallas 10, 12, and 13, which correspond to the three VDC zones:

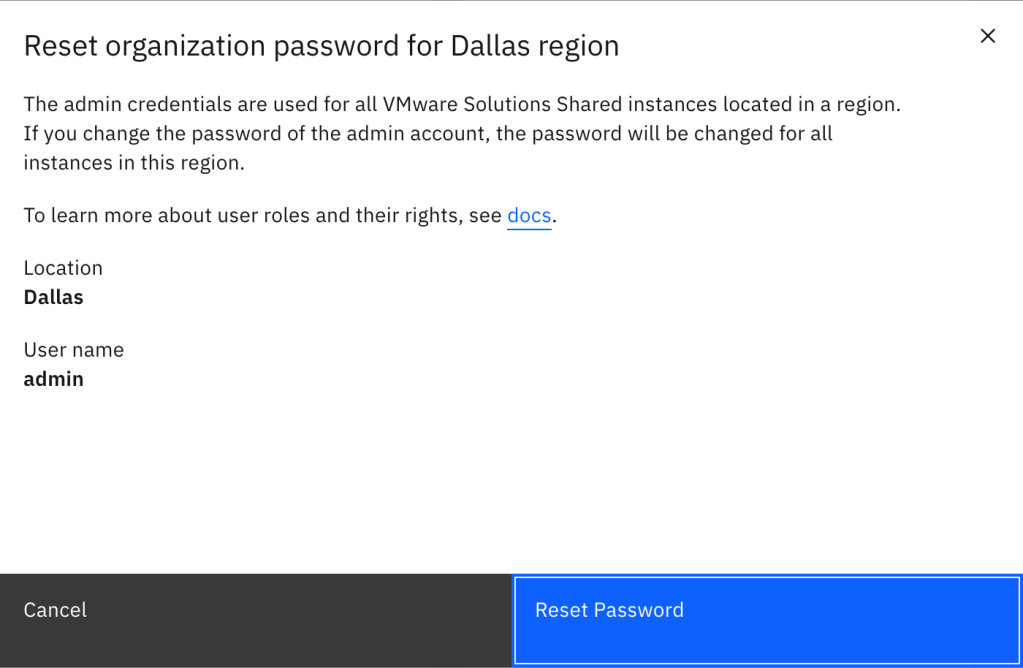

After creating your three virtual data centers, you need to view any one of these VDCs and reset the administrator password to gain access to the single Cloud Director organization for your account. Using this administrator account you can create additional users and optionally integrate with your own SSO provider:

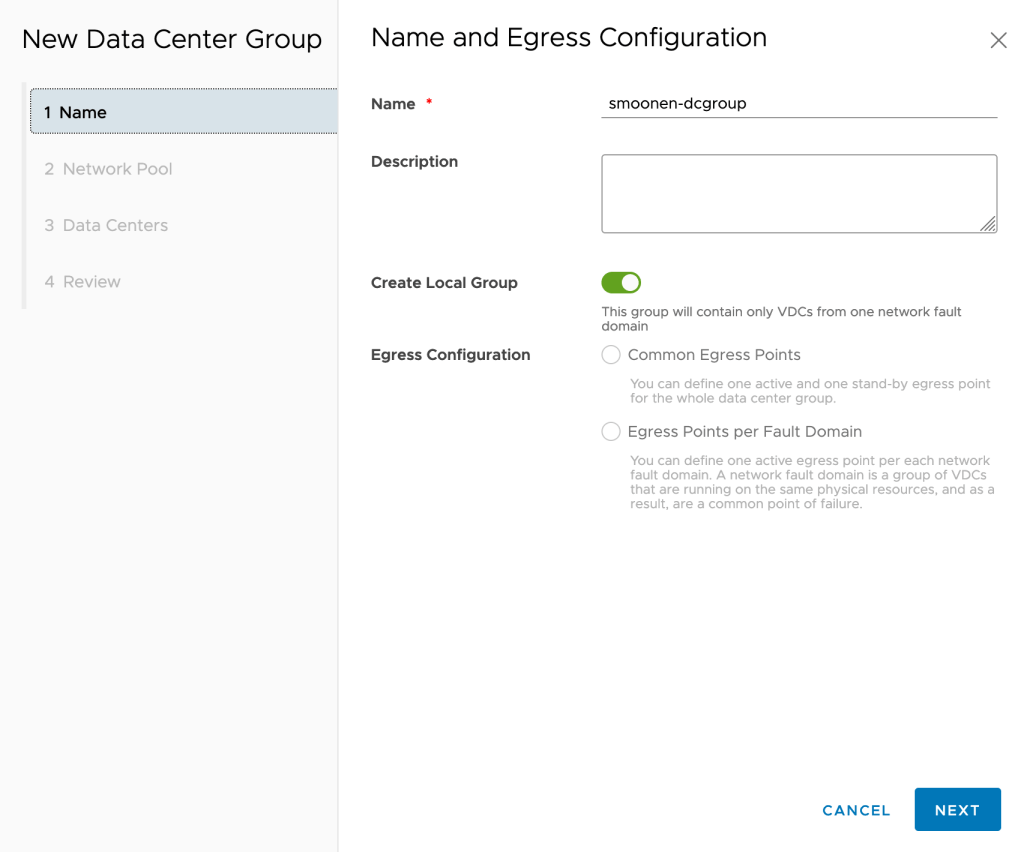

Next, use these credentials to log in to the Cloud Director console. We will create a Data Center Group and assign all three of our VDCs to it so that they have a shared stretched network and network egress. Navigate to Data Centers | Data Center Groups and create a new data center group. Ensure that you select the “Create Local Group” option; although the VDCs are actually in different availability zones, they are designated in the same fault domain from a Cloud Director perspective and we will use active-standby routing. There is only one network pool available for you to use:

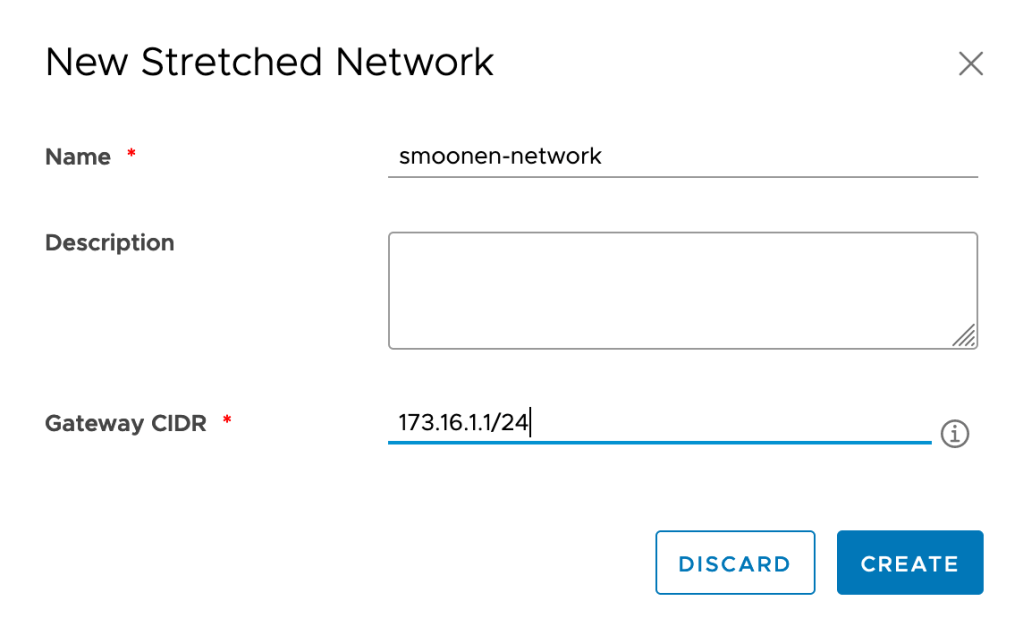

After creating the data center group, create a stretched network that will be shared by all three VDCs:

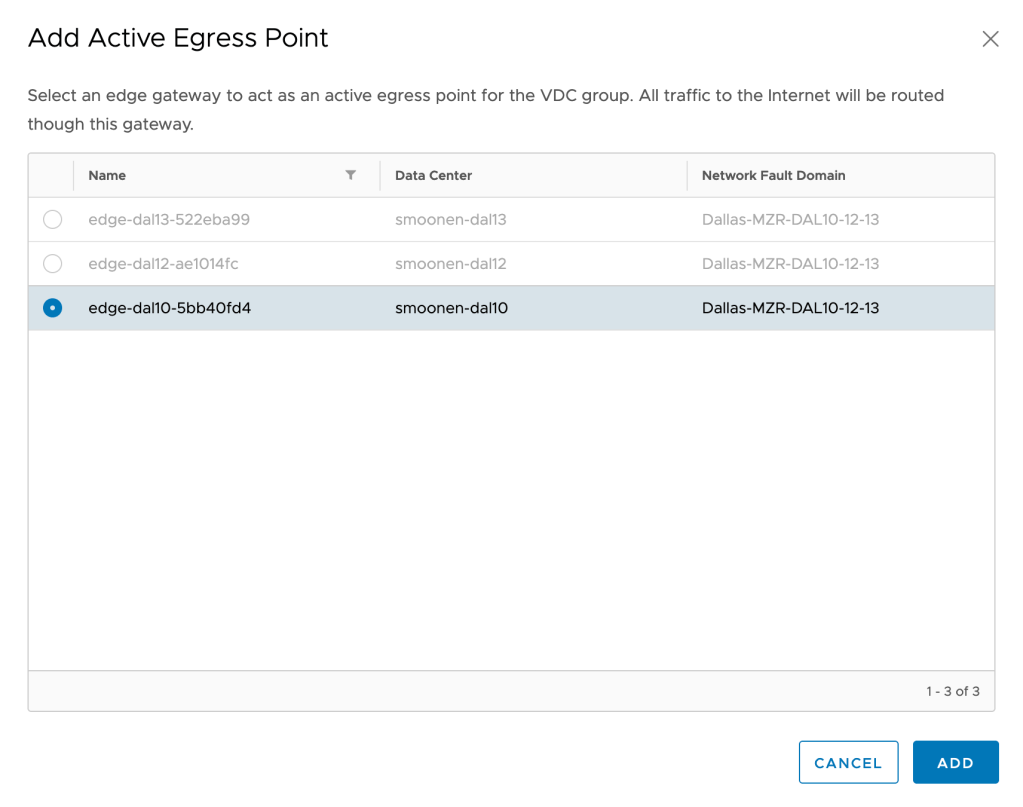

Add your DAL10 edge as the active egress point, and your DAL12 edge as the passive egress point:

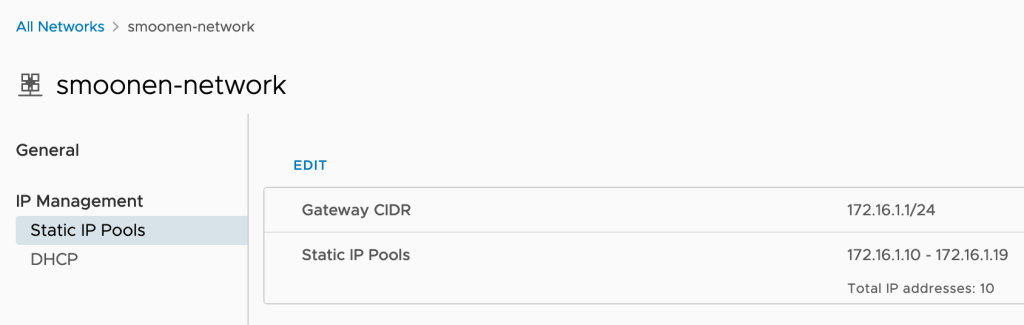

Next, navigate to each of your VDCs, view the stretched network, and create an IP pool for each VDC that is a subset of your stretched network:

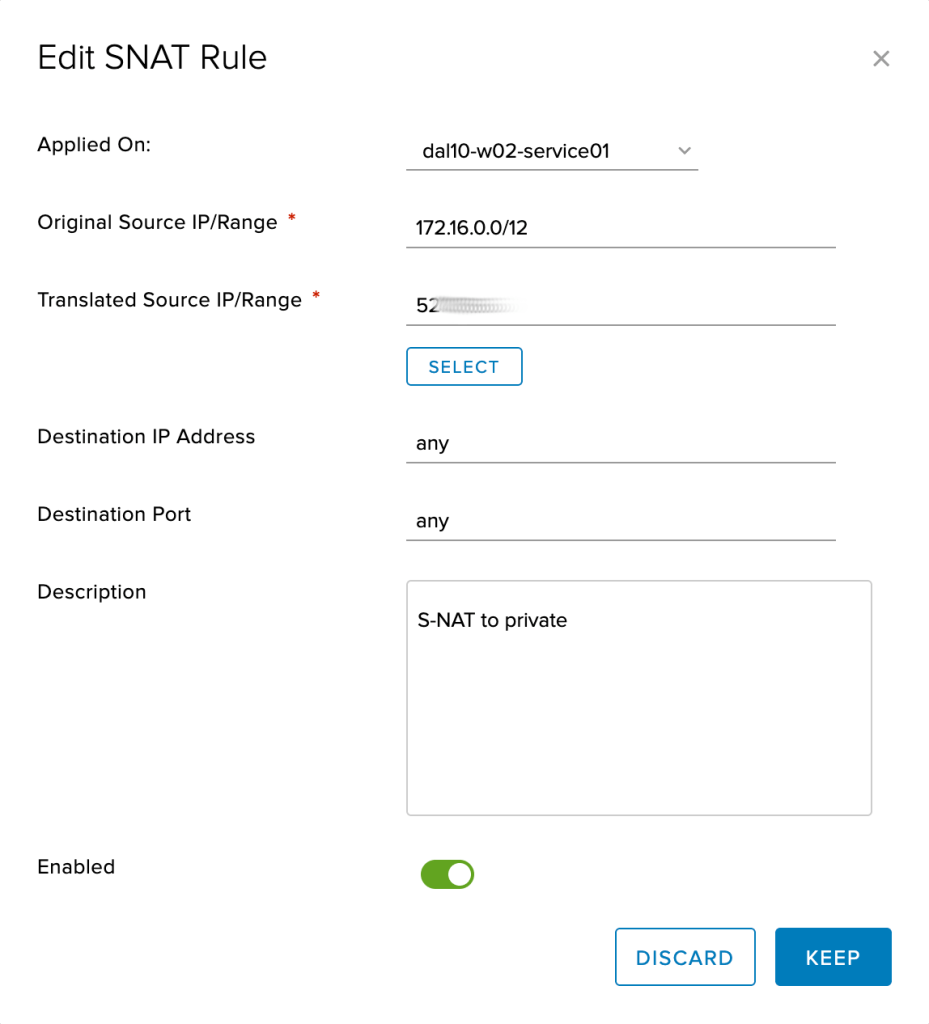

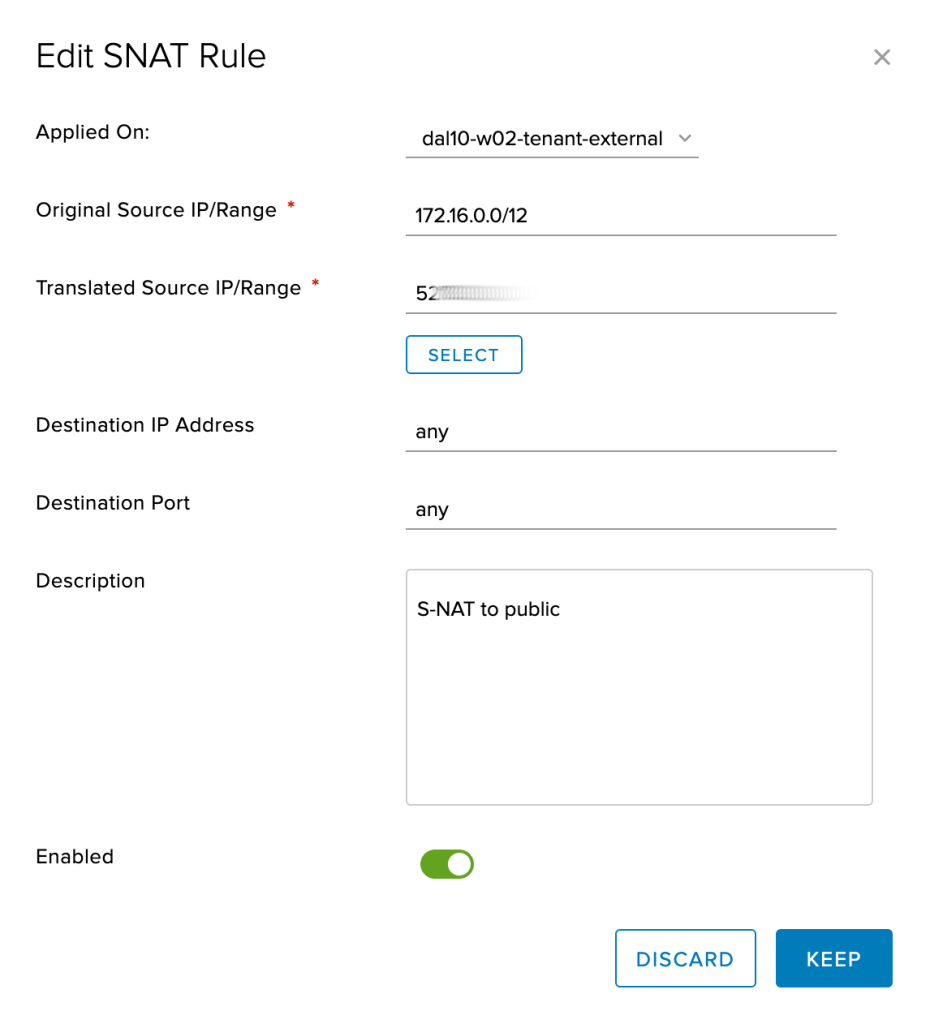
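Before entering the pools in the console, it can help to sanity-check them. The following is a minimal sketch, assuming the stretched network is 172.16.1.0/24 as used later in this article; the pool boundaries and the `validate_pools` helper are illustrative assumptions, not values from our deployment. It checks that each pool sits inside the stretched network and that no two pools overlap.

```python
import ipaddress

# Stretched network as used in this article.
STRETCHED = ipaddress.ip_network("172.16.1.0/24")

# Hypothetical per-VDC pools (first address, last address); choose your own.
POOLS = {
    "dal10": ("172.16.1.10", "172.16.1.49"),
    "dal12": ("172.16.1.50", "172.16.1.89"),
    "dal13": ("172.16.1.90", "172.16.1.129"),
}


def validate_pools(network, pools):
    """Return a list of problems: pools outside the network or overlapping."""
    problems = []
    ranges = []
    for name, (first, last) in pools.items():
        lo, hi = ipaddress.ip_address(first), ipaddress.ip_address(last)
        if lo > hi:
            problems.append(f"{name}: start is after end")
        if lo not in network or hi not in network:
            problems.append(f"{name}: outside {network}")
        ranges.append((lo, hi, name))
    # Sort by start address; any overlap then shows up between neighbours.
    ranges.sort()
    for (_, hi1, n1), (lo2, _, n2) in zip(ranges, ranges[1:]):
        if lo2 <= hi1:
            problems.append(f"{n1} overlaps {n2}")
    return problems


print(validate_pools(STRETCHED, POOLS) or "pools look consistent")
```

An empty result means the pools are at least internally consistent; it says nothing about addresses already in use on the network.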

Next, configure your DAL10 and DAL12 edges (see IBM Cloud docs for details) to allow and to SNAT egress traffic from your VPC to the IBM Cloud service network (e.g., for DNS and Red Hat Satellite) and to the public network. If you wish to DNAT traffic from the public internet to reach your virtual machines, keep in mind that the DAL10 edge is the active edge and you should not use DAL12 for ingress except in case of DAL10 failure.

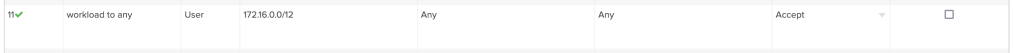

Minimally you want your workload to reach the IBM private service network which includes 52.117.132.0/24 and 161.26.0.0/16. Because we are using private network endpoints (PNEs) you also need to permit 166.9.0.0/15; this address range is also used by any other IBM Cloud services offering private endpoints. For this example I simply configured the edge firewalls to permit all outbound traffic to both private and public:
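If you prefer a narrower firewall rule than "permit all," a quick membership check shows which destinations the three ranges cover. This is a small sketch using Python's standard `ipaddress` module; the helper name `is_private_service_destination` is our own. Note that 166.9.0.0/15 as written has a host bit set, so the sketch parses it non-strictly, which normalizes it to the enclosing /15 block.

```python
import ipaddress

# Destination ranges named above. strict=False tolerates the host bit in
# "166.9.0.0/15" and normalizes it to the enclosing network (166.8.0.0/15).
REQUIRED_RANGES = [
    ipaddress.ip_network("52.117.132.0/24"),
    ipaddress.ip_network("161.26.0.0/16"),
    ipaddress.ip_network("166.9.0.0/15", strict=False),
]


def is_private_service_destination(address):
    """True if the address falls in one of the ranges the edge must permit."""
    ip = ipaddress.ip_address(address)
    return any(ip in net for net in REQUIRED_RANGES)


# The IBM Cloud DNS servers used later in this article, plus a public address.
for candidate in ("161.26.0.10", "161.26.0.11", "8.8.8.8"):
    print(candidate, is_private_service_destination(candidate))
```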

You must configure an SNAT rule for the private service network (note that this rule is created on the service interface):

and, if needed, an SNAT rule for the public network (note that this rule is created on the external interface):

Next, create the virtual machines that will serve as your database, one in each VDC. For the purposes of this example, we deployed RHEL 8 virtual machines from the provided templates and connected them to IBM Cloud’s Satellite server following the directions in the /etc/motd file. There are a few caveats to the deployment:

- You should connect the virtual machine interfaces to the stretched network before starting them so that the network customization configures their IP address. Choose an IP address from the pool you created earlier.

- At first power-on, you should “power on and force recustomization;” afterwards you can view the root password from the customization properties.

- When using a stretched network, customization does not set the DNS settings for your virtual machines. For RHEL we entered the IBM Cloud DNS servers into /etc/sysconfig/network-scripts/ifcfg-ens192 as follows:

      DNS1=161.26.0.10
      DNS2=161.26.0.11

Configure BGP over IPsec connectivity between VCD and VPC

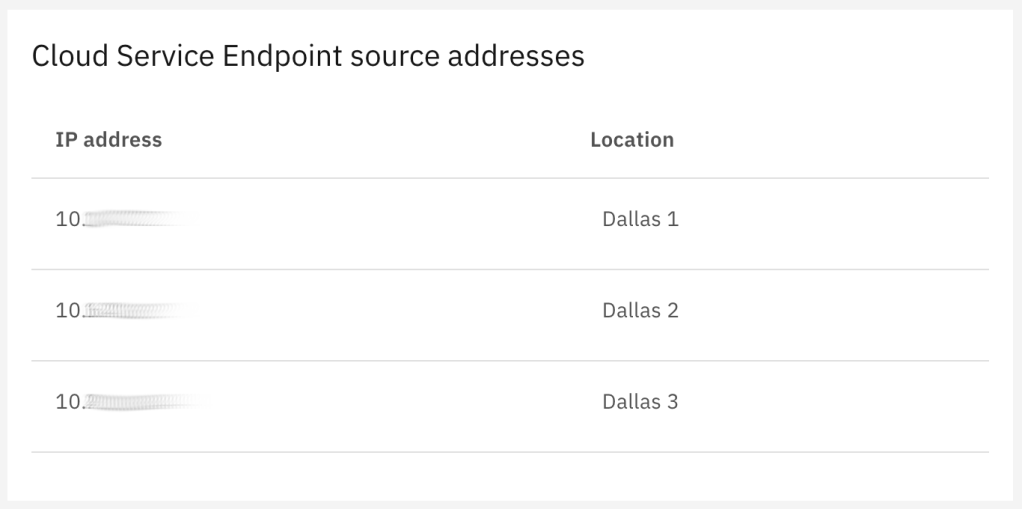

In order to expose your Cloud Director edges to your VPC using the IBM Cloud private network, you must create private network endpoints (PNEs) for your DAL10 and DAL12 VDCs. First, in the IBM Cloud console, view your VPC details. A panel on that page lists the “Cloud Service Endpoint service addresses.” These addresses are not visible within your VPC, but they are the addresses that represent your VPC, and you will need to permit them to access your PNEs. Take note of these addresses:

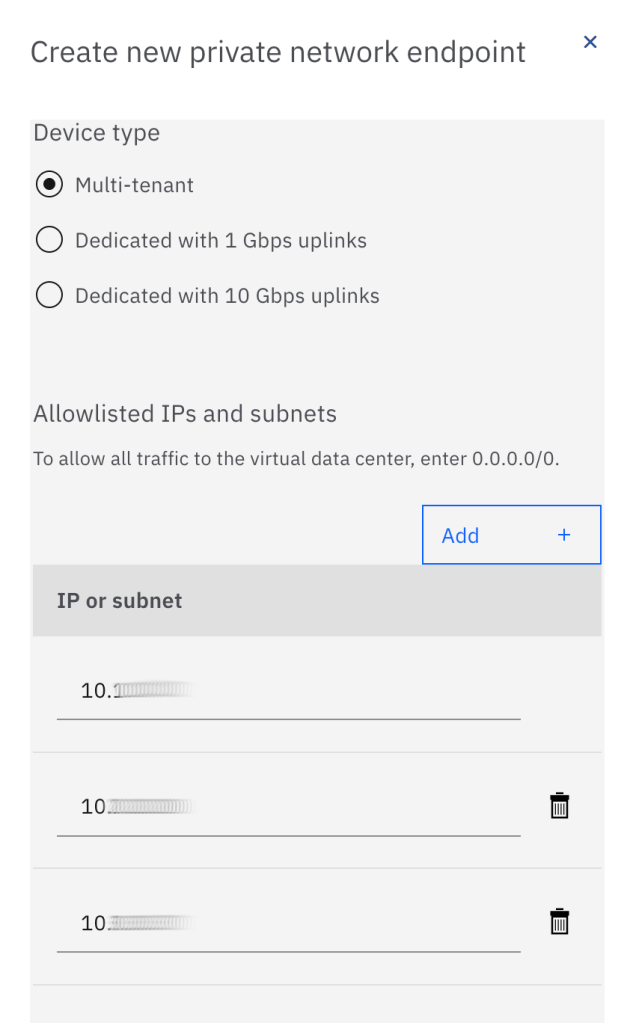

Now, navigate to your DAL10 and DAL12 VDCs in the IBM Cloud console and click “Create a private network endpoint.” Select the device type of your choice and enter the IP addresses you noted above:

The PNE may take some time to create because it is an operator-assisted activity. After it has been successfully created, you will need to create a second PNE in each of the two zones. A second PNE is needed because the PNE hides the source IP address of incoming connections, so we cannot configure policies for two different IPsec tunnels using the same PNE. The IBM Cloud console does not allow you to create a second PNE automatically, so you must open a support ticket with the VMware Solutions team. Phrase your ticket as follows:

Hi, I have already created a PNE for my VCD edges edge-dal10-xxxxxxxx and edge-dal12-yyyyyyyy. Please create a second service IP for each of these edges with an additional PNE for each edge. Please use the same whitelist for the existing PNEs. Thank you!

Note that in our example we are connecting only Dallas 1 and Dallas 2 zones from our VPC to Cloud Director. If you wanted to connect Dallas 3 as well, you would need to request three rather than two PNEs for each of your DAL10 and DAL12 edges.

Now we need to configure each of our two NSX edges and our two VPC routers to have dual BGP over IPsec connections to their peers. You need to select which PNE will be used for each VPC router connection.

On the VCD side, the IPsec VPN site configuration for one of the VPC routers looks as follows. In this case, the 52.x address is the PNE’s “service network IP” and the 166.x address is the PNE’s “private network IP:”

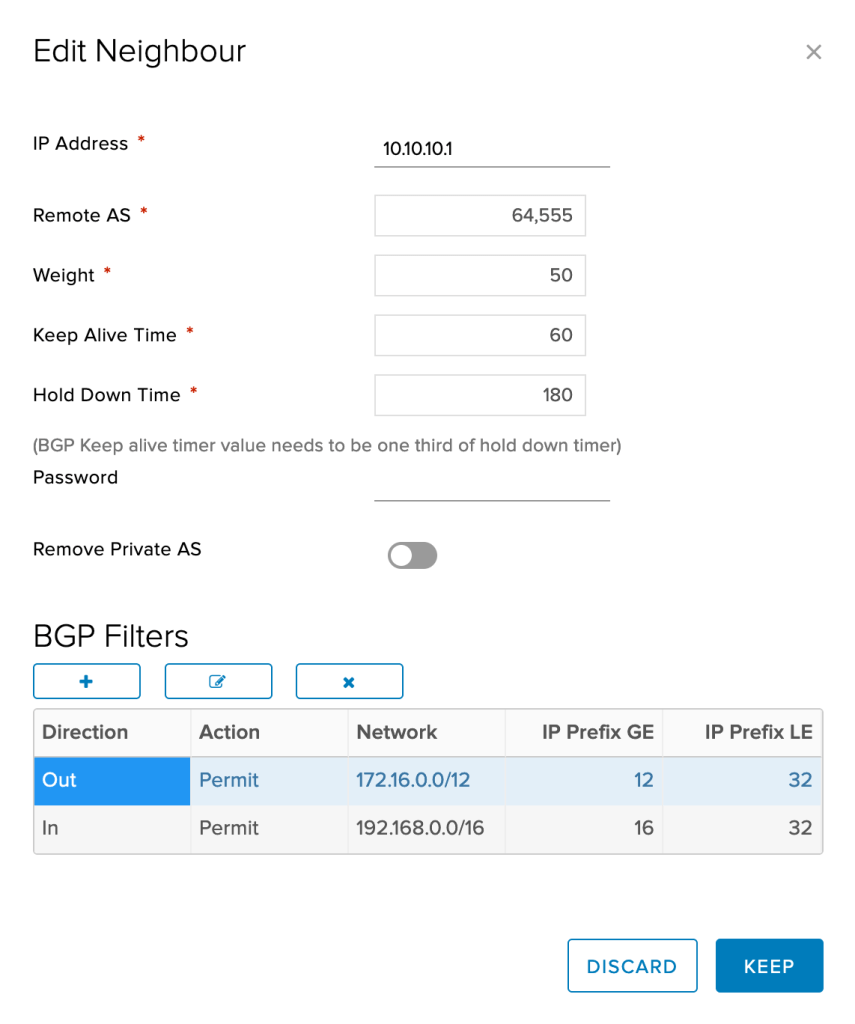

And the corresponding BGP configuration is as follows:

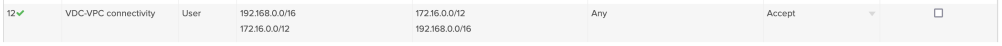

Finally, you must be sure to permit the VCD and VPC interconnectivity in both edge firewalls:

For the purposes of this example we are using RHEL 8 VSIs as simple routers on the VPC side. First of all, we need to modify /etc/sysctl.conf to allow IP forwarding:

net.ipv4.ip_forward = 1

And then turn it on dynamically:

[root@smoonen-router1 ~]# echo 1 >/proc/sys/net/ipv4/ip_forward

[root@smoonen-router1 ~]#

Next we installed the libreswan package for IKE/IPsec support, and the frr package for BGP support.

In order to use dynamic routing, the IPsec tunnel must be configured using a virtual tunnel interface (VTI). The IPsec configuration for our Dallas 1 router is as follows. The left and leftid values are the address and identity of the router appliance itself. The right value has been obscured; it reflects the address of the VCD edge as known to the router; this is the PNE’s “private network IP.” The rightid value has also been obscured; it reflects the identity of the VCD edge, which we have previously set to the PNE’s “service network IP:”

    # Connection to ESG1
    conn routed-vpn-esg1
        left=192.168.1.4
        leftid=192.168.1.4
        right=166.9.xx.xx
        rightid=52.117.xx.xx
        authby=secret
        leftsubnet=0.0.0.0/0
        rightsubnet=0.0.0.0/0
        leftvti=10.10.10.1/30
        auto=start
        ikev2=insist
        ike=aes128-sha256;modp2048
        mark=5/0xffffffff
        vti-interface=vti01
        vti-shared=no
        vti-routing=no

    # Connection to ESG2
    conn routed-vpn-esg2
        left=192.168.1.4
        leftid=192.168.1.4
        right=166.9.yy.yy
        rightid=52.117.yy.yy
        priority=2000
        authby=secret
        leftsubnet=0.0.0.0/0
        rightsubnet=0.0.0.0/0
        leftvti=10.10.10.5/30
        auto=start
        ikev2=insist
        ike=aes128-sha256;modp2048
        mark=6/0xffffffff
        vti-interface=vti02
        vti-shared=no
        vti-routing=no

Note that each tunnel uses its own mark and its own VTI interface. Next, in /etc/frr/daemons, enable bgpd:

bgpd=yes

Then define your tunnel interfaces in /etc/frr/zebra.conf; these are the interfaces for our Dallas 1 router:

    !
    interface vti1
     ip address 10.10.10.1/30
     ipv6 nd suppress-ra
    !
    interface vti2
     ip address 10.10.10.5/30
     ipv6 nd suppress-ra
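Each tunnel is numbered out of its own /30, which leaves exactly two usable addresses: one for the router end and one for the edge end. The edge end is also the address the router uses as its BGP neighbor. As a small sketch (the `tunnel_peer` helper is our own naming), the far-end address can be derived from the local VTI address:

```python
import ipaddress


def tunnel_peer(local_cidr):
    """Return the other usable host address in a /30 tunnel network."""
    iface = ipaddress.ip_interface(local_cidr)
    if iface.network.prefixlen != 30:
        raise ValueError(f"{local_cidr} is not a /30")
    hosts = list(iface.network.hosts())  # the two usable addresses
    if iface.ip not in hosts:
        raise ValueError(f"{iface.ip} is not a usable host address")
    return next(h for h in hosts if h != iface.ip)


# The two VTI addresses configured on our Dallas 1 router.
for local in ("10.10.10.1/30", "10.10.10.5/30"):
    print(local, "->", tunnel_peer(local))
```

For the addresses above this yields 10.10.10.2 and 10.10.10.6 as the edge-side tunnel addresses.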

Finally, configure BGP in /etc/frr/bgpd.conf:

    hostname smoonen-router1
    router bgp 64555
     bgp router-id 10.10.10.1
     network 10.10.10.0/30
     network 10.10.10.4/30
     network 192.168.1.0/24
     neighbor 10.10.10.2 remote-as 65010
     neighbor 10.10.10.2 route-map RMAP-IN in
     neighbor 10.10.10.2 route-map RMAP-OUT out
     neighbor 10.10.10.2 soft-reconfiguration inbound
     neighbor 10.10.10.2 weight 2
     neighbor 10.10.10.6 remote-as 65010
     neighbor 10.10.10.6 route-map RMAP-IN in
     neighbor 10.10.10.6 route-map RMAP-OUT out
     neighbor 10.10.10.6 soft-reconfiguration inbound
     neighbor 10.10.10.6 weight 1
    ip prefix-list PRFX-VCD seq 5 permit 172.16.0.0/12 le 32
    ip prefix-list PRFX-VPC seq 5 permit 192.168.0.0/16 le 32
    route-map RMAP-IN permit 10
     match ip address prefix-list PRFX-VCD
    route-map RMAP-OUT permit 10
     match ip address prefix-list PRFX-VPC
    log file /var/log/frr/bgpd.log debug
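The two prefix lists decide which routes are accepted from and advertised to the edges. As we understand the `le 32` form, an entry matches any route that lies inside the listed prefix and whose length is between the entry's own length and 32. The following sketch models only that one rule (it is not a full route-map evaluator, and `prefix_list_permits` is our own helper name):

```python
import ipaddress


def prefix_list_permits(entry, le, route):
    """Model 'permit <entry> le <le>': route must lie within entry and have
    a prefix length between the entry's length and le, inclusive."""
    entry_net = ipaddress.ip_network(entry)
    route_net = ipaddress.ip_network(route)
    return (route_net.subnet_of(entry_net)
            and entry_net.prefixlen <= route_net.prefixlen <= le)


PRFX_VCD = ("172.16.0.0/12", 32)   # used by RMAP-IN
PRFX_VPC = ("192.168.0.0/16", 32)  # used by RMAP-OUT

# Stretched network received from the edges.
print(prefix_list_permits(*PRFX_VCD, "172.16.1.0/24"))
# Zone subnet advertised to the edges.
print(prefix_list_permits(*PRFX_VPC, "192.168.1.0/24"))
# The tunnel /30 is outside PRFX-VPC, so RMAP-OUT does not permit it.
print(prefix_list_permits(*PRFX_VPC, "10.10.10.0/30"))
```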

Taken together, we have configured:

- Cloud Director to use DAL10 as active and DAL12 as standby

- Cloud Director edges will advertise the entire stretch network (172.16.1.0/24) to the VPC routers

- Each VPC router is configured to prefer the DAL10 edge

- Each VPC router will advertise its own zone (192.168.1.0/24 or 192.168.2.0/24) to the Cloud Director edges
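The preference for DAL10 comes from the neighbor weights: when the same prefix is learned from both neighbors, the path with the higher weight wins. Here is a deliberately simplified sketch of that single decision step, ignoring every other BGP tie-breaker. The `Path` class and `best_path` helper are illustrative, and the mapping of 10.10.10.2 to DAL10 is inferred from the weights in our configuration.

```python
from dataclasses import dataclass


@dataclass
class Path:
    neighbor: str
    weight: int
    up: bool = True


def best_path(paths):
    """Pick the available path with the highest weight, or None if all are down."""
    candidates = [p for p in paths if p.up]
    return max(candidates, key=lambda p: p.weight) if candidates else None


# Paths to the stretched network as seen from one VPC router.
paths = [
    Path(neighbor="10.10.10.2", weight=2),  # assumed to be the DAL10 edge
    Path(neighbor="10.10.10.6", weight=1),  # assumed to be the DAL12 edge
]

print(best_path(paths).neighbor)  # preferred path while both sessions are up
paths[0].up = False               # simulate losing the DAL10 session
print(best_path(paths).neighbor)  # traffic shifts to the remaining neighbor
```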

Now enable IPsec and FRR:

    systemctl start ipsec
    systemctl enable ipsec
    ipsec auto --add routed-vpn-esg1
    ipsec auto --add routed-vpn-esg2
    ipsec auto --up routed-vpn-esg1
    ipsec auto --up routed-vpn-esg2
    chown frr:frr /etc/frr/bgpd.conf
    chown frr:frr /etc/frr/staticd.conf
    systemctl start frr
    systemctl enable frr

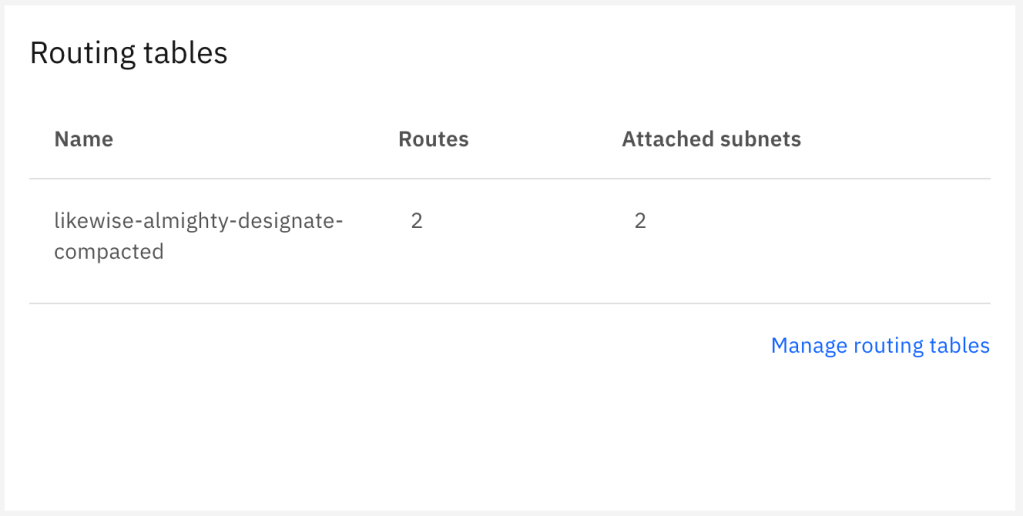

Finally, you need to visit the IBM Cloud console and find the route table configuration for your VPC:

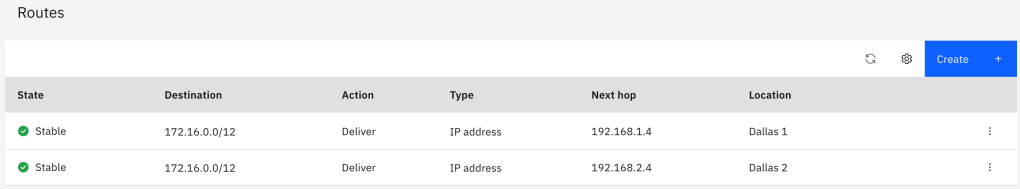

Modify the route table configuration to direct the VCD networks to your router VSI in each zone. Remember that for this example we are hosting applications only in two zones:
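Conceptually, each zone gets a route whose destination is the VCD network and whose next hop is that zone's router VSI. The sketch below models this as a per-zone lookup with longest-prefix matching. The zone labels and the second router's address (192.168.2.4) are assumptions for illustration; only 192.168.1.4 and 172.16.1.0/24 come from our configuration.

```python
import ipaddress

# Per-zone custom routes: (destination prefix, next-hop router VSI).
ROUTES = {
    "dallas-1": [("172.16.1.0/24", "192.168.1.4")],
    "dallas-2": [("172.16.1.0/24", "192.168.2.4")],  # hypothetical address
}


def next_hop(zone, destination):
    """Return the next hop for a destination in a zone, or None if no route."""
    dest = ipaddress.ip_address(destination)
    matches = []
    for prefix, hop in ROUTES.get(zone, []):
        net = ipaddress.ip_network(prefix)
        if dest in net:
            matches.append((net.prefixlen, hop))
    # Longest prefix wins when several routes match.
    return max(matches)[1] if matches else None


print(next_hop("dallas-1", "172.16.1.10"))
print(next_hop("dallas-2", "172.16.1.10"))
print(next_hop("dallas-1", "8.8.8.8"))
```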

After the tunnel is up and the initial BGP exchange complete, you should have bidirectional connectivity between both environments. Here is a ping from one of our application VSIs:

    [root@smoonen-application1 ~]# ping -c 3 -I 192.168.1.5 172.16.1.10
    PING 172.16.1.10 (172.16.1.10) from 192.168.1.5 : 56(84) bytes of data.
    64 bytes from 172.16.1.10: icmp_seq=1 ttl=61 time=3.21 ms
    64 bytes from 172.16.1.10: icmp_seq=2 ttl=61 time=2.34 ms
    64 bytes from 172.16.1.10: icmp_seq=3 ttl=61 time=2.87 ms
    --- 172.16.1.10 ping statistics ---
    3 packets transmitted, 3 received, 0% packet loss, time 2003ms
    rtt min/avg/max/mdev = 2.344/2.809/3.210/0.356 ms
    [root@smoonen-application1 ~]#

We have not tuned BGP, but in spite of this, if we disable BGP on the DAL10 edge (this effectively severs both its connection to the stretched network and its connection to VPC), we see that the connectivity from the VPC fails over to the DAL12 edge:

    64 bytes from 172.16.1.10: icmp_seq=16 ttl=61 time=2.51 ms
    64 bytes from 172.16.1.10: icmp_seq=17 ttl=61 time=16.9 ms
    64 bytes from 172.16.1.10: icmp_seq=18 ttl=61 time=2.63 ms
    64 bytes from 172.16.1.10: icmp_seq=137 ttl=61 time=8.52 ms
    64 bytes from 172.16.1.10: icmp_seq=138 ttl=61 time=6.06 ms
    64 bytes from 172.16.1.10: icmp_seq=139 ttl=61 time=5.07 ms
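The jump in sequence numbers gives a rough measure of the failover time. The sketch below extracts the `icmp_seq` values from the output above and reports the largest run of missing replies; the `largest_gap` helper is our own. Translating lost probes into seconds assumes ping's default interval of one probe per second, which is an assumption about how the test was run.

```python
import re

PING_LINES = """\
64 bytes from 172.16.1.10: icmp_seq=16 ttl=61 time=2.51 ms
64 bytes from 172.16.1.10: icmp_seq=17 ttl=61 time=16.9 ms
64 bytes from 172.16.1.10: icmp_seq=18 ttl=61 time=2.63 ms
64 bytes from 172.16.1.10: icmp_seq=137 ttl=61 time=8.52 ms
64 bytes from 172.16.1.10: icmp_seq=138 ttl=61 time=6.06 ms
64 bytes from 172.16.1.10: icmp_seq=139 ttl=61 time=5.07 ms
"""


def largest_gap(text):
    """Return (missing_replies, last_seq_before, first_seq_after)."""
    seqs = [int(s) for s in re.findall(r"icmp_seq=(\d+)", text)]
    gaps = [(b - a - 1, a, b) for a, b in zip(seqs, seqs[1:])]
    return max(gaps, default=(0, None, None))


missing, before, after = largest_gap(PING_LINES)
print(f"{missing} replies missing between icmp_seq={before} and icmp_seq={after}")
```

At one probe per second, that gap corresponds to roughly two minutes of interrupted connectivity with untuned BGP timers.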

Conclusion

We have successfully established bidirectional connectivity over the IBM Cloud private network between VMware Cloud Director and IBM Cloud VPC using BGP over IPsec.

As described above, it is possible to extend this solution by deploying a router appliance in the third VPC availability zone, in which case you would need to deploy two more PNEs, one for each of your VCD edges. Also, you will need additional PNEs if you deploy more than one router appliance into each zone for HA. Thus, you could require up to twelve PNEs (two router appliances in each of three zones, each of which has a connection to two VCD edges).
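The PNE arithmetic is simple enough to capture in a few lines. Because each IPsec tunnel needs its own PNE, every edge needs one PNE per VPC router that connects to it. The `pne_count` helper below is an illustrative sketch of that rule:

```python
def pne_count(zones, routers_per_zone, edges=2):
    """Return (PNEs per edge, total PNEs) given one PNE per router-to-edge tunnel."""
    per_edge = zones * routers_per_zone
    return per_edge, per_edge * edges


# This article's example: two zones, one router each, two VCD edges.
print(pne_count(zones=2, routers_per_zone=1))
# Adding the third zone.
print(pne_count(zones=3, routers_per_zone=1))
# Two routers per zone for HA in all three zones.
print(pne_count(zones=3, routers_per_zone=2))
```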

Many thanks to Mike Wiles and Jim Robbins for their assistance in developing this solution.